- Emergent Computation

- Anticipatory Systems

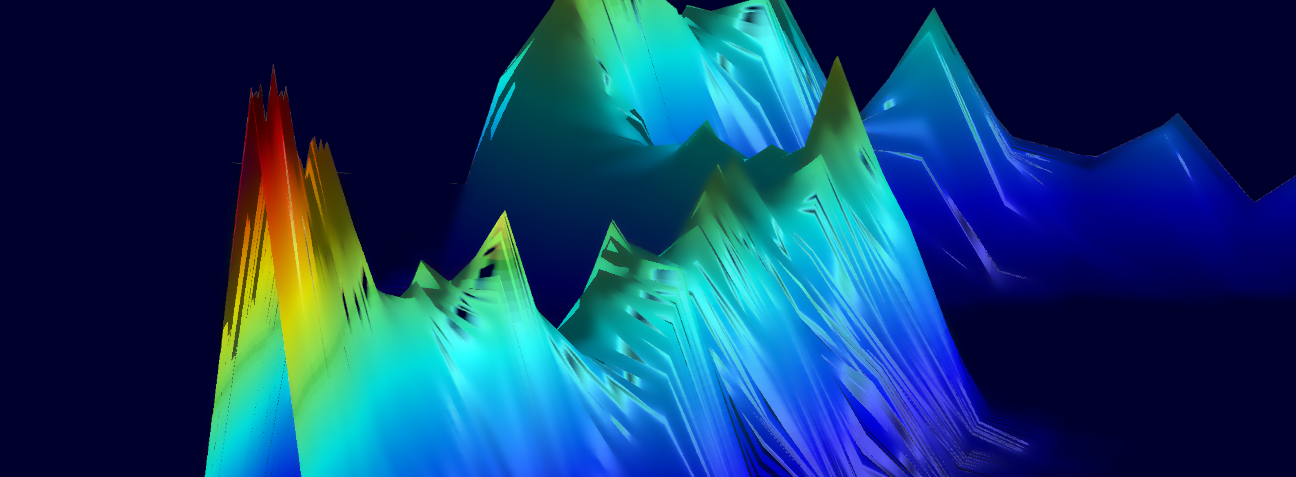

- Neuromorphic Computing

- Functional Programming

- Self-Organization

HRL Laboratories, LLC

CESPA: Center for the Ecological Study of Perception and Action.

In general, I like to solve interesting problems. Problems are interesting when they relate to other problems, ask for elegant solutions, and have a chance to extend our way of thinking. Not every problem can be that interesting, of course; just making something work, especially if it seems like it shouldn't or can't, is rewarding in itself.

What is intelligence, and how does it appear out of a lump of matter? These questions underlie a number of disciplines, including experimental psychology, artificial intelligence, and the philosophy of mind. In my opinion these disciplines are more closely connected than they are usually treated as being, and more collaboration between them is needed to crack the hard problems that each deals with. Several times, I have seen a room of very smart people discuss a problem and end up rehashing solutions that were introduced in the 19th, 18th, or even 17th century. I feel the road to understanding intelligence (and also defining it) is to find the right formalism to encapsulate the larger physics of life. My other interests can be seen as candidates for that formalism.

Building an intelligent system, which I would argue is necessarily complex, directly in its finished, intelligent form may be intractable. Biology itself chooses not to do so! All existing intelligent systems start from a seed and grow into something we call intelligent. If we are to build our own intelligent systems, we may have to follow this same trajectory.

As such, one of the key problems to be solved is how to construct a seed that, when embedded in the appropriate environment, grows into something with desired properties. Put another way, how can you predict and control the end behavior of complex, potentially (probably) chaotic dynamical systems?

In emergent computation, this means setting up an initial state plus dynamic such that when you hit play, your desired function is computed. In the more general field of self-organization, this means being able to know, and control by design, what a self-organizing system organizes into. One of the key tenets here is that the system in question "does what it normally does," but it has been put in an initial state, and placed into an environment, such that what it normally does ends up being what you want it to do.
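As a toy illustration of "initial state plus dynamic," here is a minimal sketch using an elementary cellular automaton. The choice of Rule 90 and the function names `rule90_step`, `run_rule90`, and `binomial_parity_row` are assumptions made for this example, not something taken from the work described here. The rule only ever does what it normally does (each cell becomes the XOR of its two neighbors), but seeding it with a single live cell makes row t of the run spell out the binomial coefficients C(t, k) modulo 2.

```python
from math import comb


def rule90_step(cells):
    """One synchronous update: each cell becomes left XOR right (zeros beyond the edges)."""
    padded = [0] + cells + [0]
    return [padded[i - 1] ^ padded[i + 1] for i in range(1, len(padded) - 1)]


def run_rule90(steps):
    """Seed a single 1 in the centre of a row wide enough that the edges never interfere."""
    cells = [0] * (2 * steps + 1)
    cells[steps] = 1  # the "seed": the only design decision besides the rule itself
    history = [cells]
    for _ in range(steps):
        cells = rule90_step(cells)
        history.append(cells)
    return history


def binomial_parity_row(t, steps):
    """Reference answer: C(t, k) mod 2, placed at offset 2k - t from the centre."""
    row = [0] * (2 * steps + 1)
    for k in range(t + 1):
        row[steps + 2 * k - t] = comb(t, k) % 2
    return row


if __name__ == "__main__":
    steps = 8
    for t, row in enumerate(run_rule90(steps)):
        assert row == binomial_parity_row(t, steps)
        print("".join("#" if c else "." for c in row))
```

Nothing in the update rule mentions binomial coefficients; the function being computed is fixed entirely by the pairing of rule and seed. A different seed under the same rule yields a different computation.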

For intelligent systems that are embodied and embedded in an environment (which, by the way, are all the ones we know), I believe that the above problems are exemplified by and potentially solved by combined perception and action. Treating perception and action as inseparable does quite a few things.

It forces us to deal in the world of complex systems, specifically ones which have some self-defining property. Such properties are the basis for many if not all of the really juicy paradoxes, which can almost always be boiled down to creating a class with some bifurcating property, such that a member of the class participates in defining the property. Examples include the Liar of Epimenides, the Barber Paradox, Haugeland's Paradox of Mechanical Reason, Plato's Paradox of the Meno, Gödel's Incompleteness, and so on. In all of these cases it is some dualism that forces the paradox.

Treating perception and action as a dualism, like mind and body, invites these paradoxes. Keeping them together invites new methods.

Washburn, A., Kallen, R. W., Lamb, M., Stepp, N., Shockley, K., & Richardson, M. J. (2019). Feedback delays can enhance anticipatory synchronization in human-machine interaction. PloS one, 14(8), e0221275.

Stepp, N. & Jammalamadaka, A. (2018). A Dynamical Systems Approach to Neuromorphic Computation of Conditional Probabilities. In Proceedings of the International Conference on Neuromorphic Systems (ICONS '18). Association for Computing Machinery, New York, NY, USA, Article 7, 1–4.

Stepp, N. & Turvey, M. T. (2017). Anticipation in manual tracking with multiple delays. Journal of Experimental Psychology: Human Perception and Performance, 43(5), 914.

Salas, S. M., Patrick, R. J., Roach, S. M., Stepp, N. D., Cruz-Albrecht, J., Phillips, M. E., De Sapio, V., Lu, T., & Sritapan, V. (2017, April). Neuromorphic and Early Warning behavior-based authentication in common theft scenarios. In 2017 IEEE International Symposium on Technologies for Homeland Security (HST) (pp. 1-6). IEEE.

Voss, H. U., & Stepp, N. (2016). A negative group delay model for feedback-delayed manual tracking performance. Journal of computational neuroscience, 41(3), 295-304.

Srinivasa, N., Stepp, N., & Cruz-Albrecht, J. (2015). Criticality as a Set-Point for Adaptive Behavior in Neuromorphic Hardware. Frontiers in Neuroscience, 9, 449.

Stepp, N., Plenz, D. & Srinivasa, N. (2015). Synaptic Plasticity Enables Adaptive Self-Tuning Critical Networks. PLoS Computational Biology, 11.

Stepp, N. & Turvey, M.T. (2015). The Muddle of Anticipation. Ecological Psychology, 27, 103-126.

Stepp, N. & Srinivasa, N. (2012). A formal model for autocatakinetic systems. Ecological Psychology, 24, 204-219.

Moreno, M., Stepp, N. & Turvey, M. T. (2011). Whole body lexical decision. Neuroscience Letters, 490, 126-129.

Stepp, N., Chemero, A. & Turvey, M. T. (2011). Philosophy for the Rest of Cognitive Science. Topics in Cognitive Science, 3, 425-437.

Stepp, N. & Turvey, M. T. (2010). On Strong Anticipation. Cognitive Systems Research, 11, 148-164.

Stepp, N. (2009). Anticipation in feedback-delayed manual tracking of a chaotic oscillator. Experimental Brain Research, 198, 521-525.

Stepp, N. & Frank, T. D. (2009). A data-analysis method for decomposing synchronization variability of anticipatory systems into stochastic and deterministic components. The European Physical Journal B, 67(2), 251-257.

Stepp, N. & Turvey, M. T. (2008). Anticipating synchronization as an alternative to the internal model. Behavioral and Brain Sciences, Cambridge Univ Press, 31(2), 216-217.

Stephen, D. G., Stepp, N., Dixon, J. A., & Turvey, M. T. (2008). Strong anticipation: Sensitivity to long-range correlations in synchronization behavior. Physica A, 387(21), 5271-5278.

De Sapio, Vincent, et al. "System for continuous validation and threat protection of mobile applications." U.S. Patent No. 10,986,113. 20 Apr. 2021.

Jiang, Qin, et al. "System and method for synthetic aperture radar target recognition utilizing spiking neuromorphic networks." U.S. Patent No. 10,976,429. 13 Apr. 2021.

Patrick, Richard J., et al. "Neuromorphic system for authorized user detection." U.S. Patent No. 10,902,115. 26 Jan. 2021.

Martin, Charles E., et al. "Method and system for detecting change of context in video streams." U.S. Patent No. 10,878,276. 29 Dec. 2020.

Huber, David J., Nigel D. Stepp, and Tsai-Ching Lu. "Aircraft maintenance message prediction." U.S. Patent No. 10,787,278. 29 Sep. 2020.

Stepp, Nigel D., David J. Huber, and Tsai-Ching Lu. "System of structured argumentation for asynchronous collaboration and machine-based arbitration." U.S. Patent Application No. 16/724,130.

Jammalamadaka, Aruna, and Nigel D. Stepp. "Neuronal network topology for computing conditional probabilities." U.S. Patent No. 10,748,063. 18 Aug. 2020.

Huber, David J., et al. "System and method for human-machine hybrid prediction of events." U.S. Patent Application No. 16/708,166.

Stepp, Nigel D., and Aruna Jammalamadaka. "Network composition module for a bayesian neuromorphic compiler." U.S. Patent Application No. 16/792,791.

Skorheim, Steven W., Nigel D. Stepp, and Ruggero Scorcioni. "Artificial neural networks having competitive reward modulated spike time dependent plasticity and methods of training the same." U.S. Patent Application No. 16/661,637.

Chang, Hao-yuan, Aruna Jammalamadaka, and Nigel D. Stepp. "Spiking neural network for probabilistic computation." U.S. Patent Application No. 16/577,908.

Stepp, Nigel D., and Aruna Jammalamadaka. "Programming model for a bayesian neuromorphic compiler." U.S. Patent Application No. 16/294,886.

Pilly, Praveen K., Nigel D. Stepp, and Narayan Srinivasa. "Sparse inference modules for deep learning." U.S. Patent Application No. 15/079,899.